Context to Confidence: The Next Phase of Ambiguous Techniques Research

MITRE CTID’s latest ambiguous techniques research turns context into confidence with minimum telemetry requirements and a confidence scoring …

By Tabitha Colter, Shiri Bendelac, Lily Wong, Christina Liaghati and Keith Manville • September 30, 2024

The Secure AI research project is a collaborative effort between MITRE ATLAS™ and the Center for Threat-Informed Defense (Center) designed to facilitate rapid communication of evolving vulnerabilities in the AI security space through effective incident sharing. This research effort will boost community knowledge of threats to Artificial Intelligence-enabled systems. As AI technology and adoption advance rapidly across critical domains, new threat vectors and vulnerabilities continue to emerge, and they require novel security procedures.

In partnership with AttackIQ, BlueRock, Booz Allen Hamilton, CATO Networks, Citigroup, CrowdStrike, FS-ISAC, Fujitsu, HCA Healthcare, HiddenLayer, Intel Corporation, JPMorgan Chase Bank, Microsoft Corporation, Standard Chartered, and Verizon Business, we deployed a system for improved AI incident capture and added new case studies, techniques, and mitigations to the ATLAS knowledge base. These case studies illustrate novel AI attack procedures that organizations should be aware of and defend against.

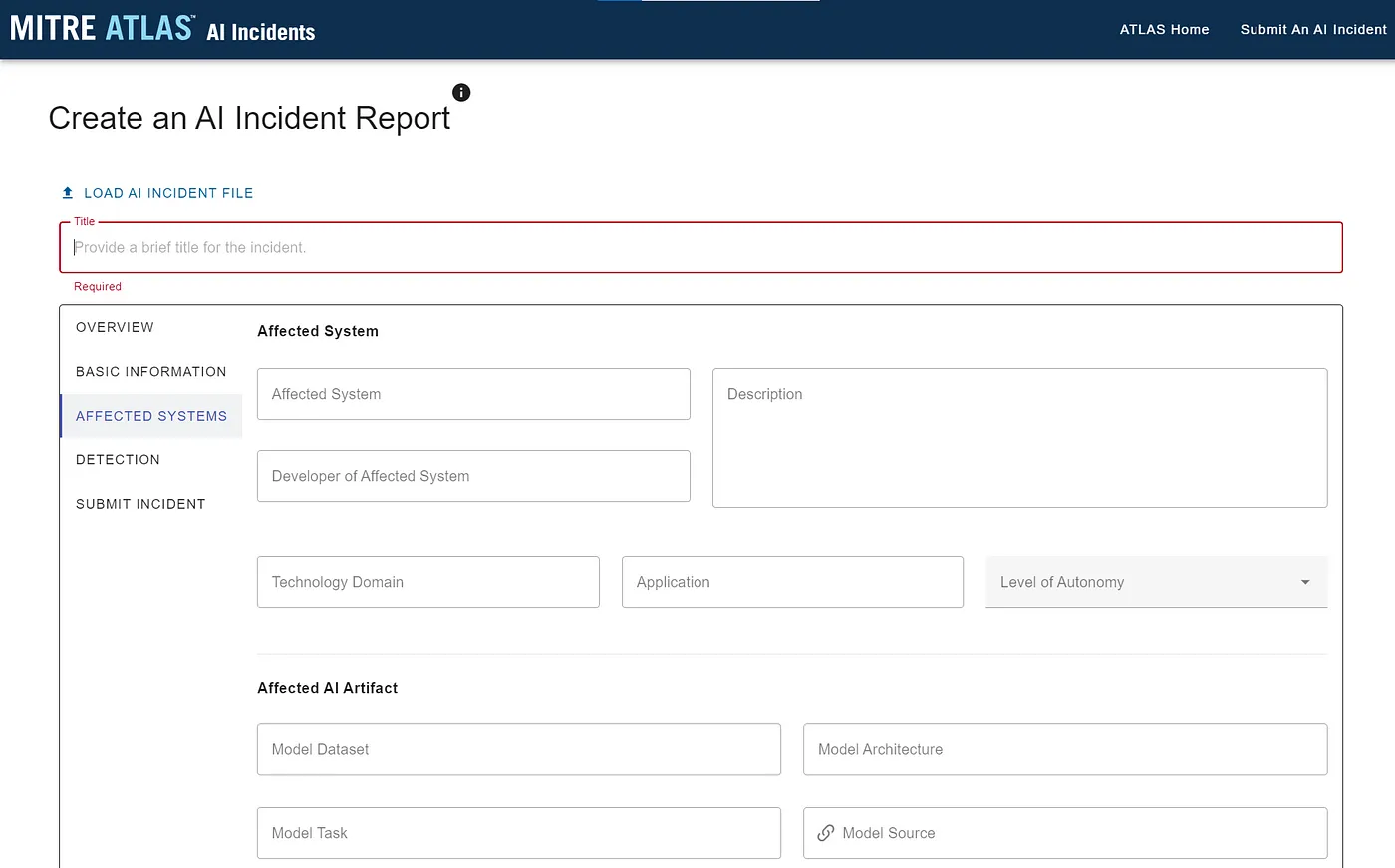

Organizations across government, industry, academia, and nonprofit sectors continue to incorporate AI components into their software systems. As adoption grows, incidents involving these systems will become more frequent. Standardized and rapid information sharing about these AI incidents will empower the entire community to improve the collective defense of such systems and prevent external harms. Sharing information about the affected AI artifacts, the affected systems and users, the attacker, and how the incident was detected can be vital to improving those defenses. For this reason, we focused on aligning the capture of information for AI incident expression with existing cybersecurity standards, using a STIX 2.1 bundle as our basis.
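To make the idea of expressing an AI incident as a STIX 2.1 bundle concrete, here is a minimal sketch using only the Python standard library. It is not the schema used by the AI Incident Sharing initiative, which this post does not describe. The helper names (`stix_id`, `stix_timestamp`, `make_incident_bundle`), the sample incident text, and the choice of a generic `related-to` relationship are all assumptions made for illustration.

```python
import json
import uuid
from datetime import datetime, timezone


def stix_id(object_type: str) -> str:
    """Return a STIX 2.1 identifier of the form '<type>--<UUIDv4>'."""
    return f"{object_type}--{uuid.uuid4()}"


def stix_timestamp(moment: datetime) -> str:
    """Format a timezone-aware datetime as a UTC timestamp with millisecond precision."""
    utc = moment.astimezone(timezone.utc)
    return utc.strftime("%Y-%m-%dT%H:%M:%S.") + f"{utc.microsecond // 1000:03d}Z"


def make_incident_bundle(name: str, description: str,
                         technique_name: str, moment: datetime) -> dict:
    """Build a small bundle: one incident, one attack pattern, one relationship.

    Hypothetical layout for illustration only; a real submission format
    may require different objects and properties.
    """
    ts = stix_timestamp(moment)
    common = {"spec_version": "2.1", "created": ts, "modified": ts}

    incident = {"type": "incident", "id": stix_id("incident"),
                "name": name, "description": description, **common}
    technique = {"type": "attack-pattern", "id": stix_id("attack-pattern"),
                 "name": technique_name, **common}
    # Link the incident to the technique observed in it.
    link = {"type": "relationship", "id": stix_id("relationship"),
            "relationship_type": "related-to",
            "source_ref": incident["id"], "target_ref": technique["id"],
            **common}

    return {"type": "bundle", "id": stix_id("bundle"),
            "objects": [incident, technique, link]}


if __name__ == "__main__":
    bundle = make_incident_bundle(
        name="Example AI incident (placeholder)",
        description="Sanitized, technically focused summary goes here.",
        technique_name="Example technique (placeholder)",
        moment=datetime.now(timezone.utc),
    )
    print(json.dumps(bundle, indent=2))
```

Keeping the incident, the technique, and the link between them as separate objects mirrors how STIX separates entities from relationships, which is what lets incident data line up with existing cybersecurity tooling.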

This project developed the AI Incident Sharing initiative as a mechanism for a community of trusted contributors to both receive and share protected and anonymized data on real-world AI incidents occurring across operational AI-enabled systems. Just as MITRE operates CVE for the cyber community and ASIAS for the aviation community, the AI Incident Sharing initiative will serve as a safe space for AI assurance incident sharing at the intersection of industry, government, and the extended community. By capturing and carefully distributing appropriately sanitized, technically focused AI incident data, this effort aims to enable more data-driven risk intelligence and analysis at scale across the community.

The first version of the AI Incident Sharing website launched in September at https://ai-incidents.mitre.org/.

Since AI-enabled systems are susceptible to both traditional cybersecurity vulnerabilities and new attacks that exploit unique characteristics of AI, we knew mapping these new threats would be a crucial aspect of securing organizations against those unique and emergent attack surfaces. For this release, we identified a swath of new case studies based on real-world attacks or realistic red teaming exercises that are designed to inform organizations about the latest threats to AI-enabled systems. We highlight one case study below and the rest are published as part of ATLAS’s most recent update:

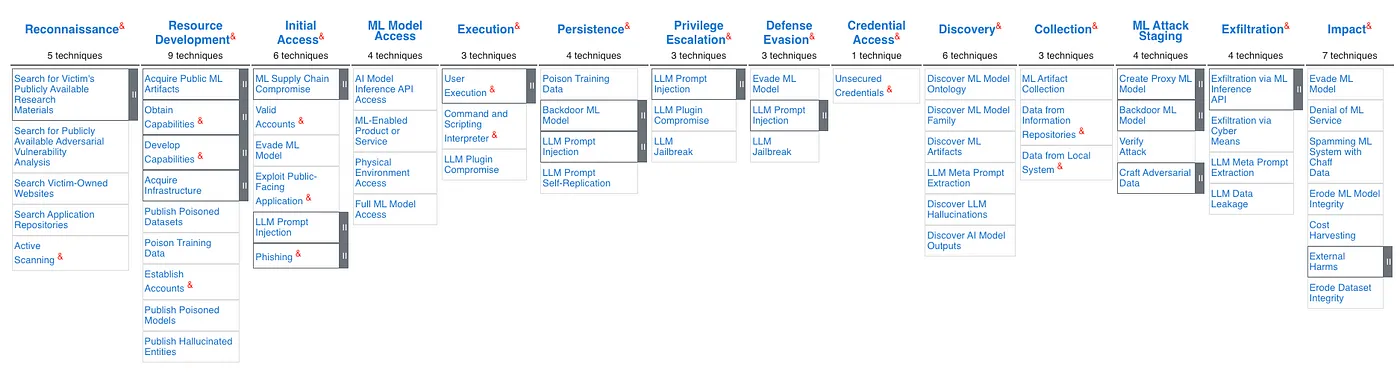

Through our research on these case studies, we added the following new techniques to the ATLAS matrix:

The Secure AI research participants also collaborated to identify other cutting-edge threats against AI-enabled systems such as:

Identifying novel vulnerabilities and attack methods is an important first step in improving the security of our AI-enabled systems. However, the increased adoption of AI within existing systems means that the mitigation of those vulnerabilities is critical to organizational success across the AI lifecycle. That’s why the ATLAS knowledge base also includes mitigations as a list of security concepts and classes of technologies that can be used to prevent a (sub)technique from being successfully executed against an AI-enabled system. In addition to the identification of new case studies and attack techniques, we also collaborated on the identification of new mitigations that can help minimize or prevent harms within AI-enabled systems. These included:

As the ground truth of adversarial TTPs for traditional cybersecurity, MITRE ATT&CK® is already widely adopted within the security community. ATLAS is modeled after and complementary to ATT&CK in order to raise awareness of the rapidly evolving vulnerabilities of AI-enabled systems as they extend beyond cyber. To continue improving the understanding of these vulnerabilities and how they relate to and differ from TTPs seen within ATT&CK, we have synchronized updates between the two knowledge bases. When ATT&CK releases a new version, ATLAS will update in kind.

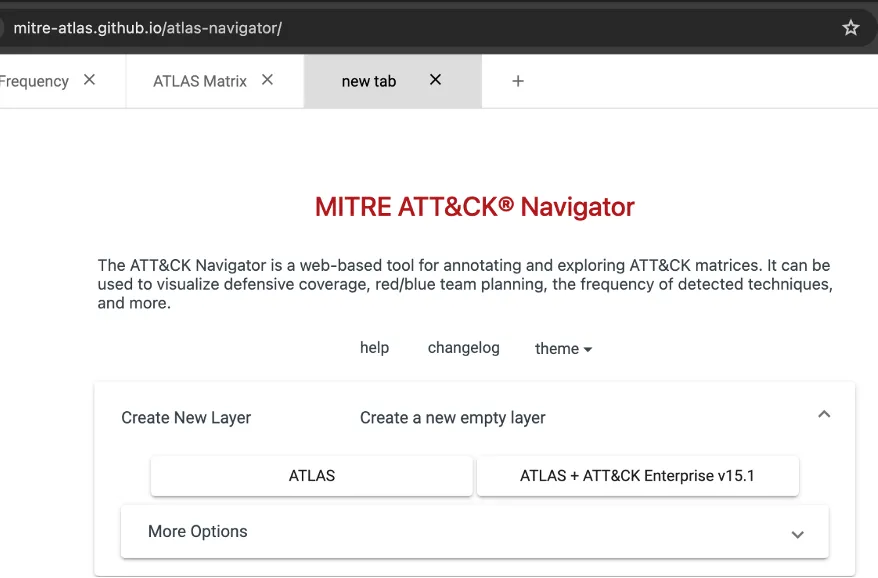

The ATLAS STIX data has been updated to include ATT&CK Enterprise v15.1, and the ATLAS matrix is now expressed as a STIX 2.1 bundle following the ATT&CK data model. The ATLAS STIX 2.1 data has been combined with the ATT&CK Enterprise data and can be used as domain data within the ATLAS Navigator.
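As a rough illustration of what combining two STIX 2.1 data sets can look like, the sketch below merges the `objects` lists of several bundles into one, dropping exact duplicates. It is not the process used to produce the published ATLAS data, which this post does not describe. The function name `combine_bundles` and the toy input bundles are hypothetical. The sketch assumes an object version is identified by its `id` together with its `modified` timestamp.

```python
import uuid


def combine_bundles(*bundles: dict) -> dict:
    """Merge the objects of several STIX bundles into a single new bundle.

    Objects sharing both 'id' and 'modified' are treated as the same
    version and kept once; input order is otherwise preserved.
    """
    seen = set()
    merged = []
    for bundle in bundles:
        for obj in bundle.get("objects", []):
            # Some objects carry no 'modified' property, so fall back to None.
            key = (obj["id"], obj.get("modified"))
            if key in seen:
                continue
            seen.add(key)
            merged.append(obj)
    return {"type": "bundle", "id": f"bundle--{uuid.uuid4()}", "objects": merged}


if __name__ == "__main__":
    # Toy stand-ins for the two data sets; ids and names are placeholders.
    shared = {"type": "attack-pattern", "id": "attack-pattern--shared",
              "modified": "2024-01-01T00:00:00.000Z", "name": "Shared technique"}
    first = {"type": "bundle", "id": "bundle--first",
             "objects": [shared,
                         {"type": "attack-pattern", "id": "attack-pattern--a",
                          "modified": "2024-01-01T00:00:00.000Z", "name": "A"}]}
    second = {"type": "bundle", "id": "bundle--second",
              "objects": [shared,
                          {"type": "attack-pattern", "id": "attack-pattern--b",
                           "modified": "2024-01-01T00:00:00.000Z", "name": "B"}]}
    combined = combine_bundles(first, second)
    print(len(combined["objects"]))
```

Deduplicating on the pair of `id` and `modified` keeps distinct versions of the same object if both inputs carry them, so choosing which version wins is left to the consumer.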

Our collective research has provided actionable documentation of novel threat vectors for AI-enabled systems, steps for mitigating those novel threats, and improved incident capture for AI incidents. But we’re not done. AI security needs evolve as new AI applications are adopted. We welcome community feedback and additional suggestions for other real-world attacks that can be included in future work as we advance community awareness of threats to AI-enabled systems. There are several ways you can get involved with this and other projects to continue advancing AI security and threat-informed defense:

Contact us at ctid@mitre.org for any questions about this and future AI security work.

© 2024 The MITRE Corporation. Approved for Public Release. ALL RIGHTS RESERVED. Document number CT0123.
